In-depth analysis of SEO crawling: how to use crawling to improve website rankings

Synopsis

Google is the world's largest search engine and has become an essential tool for consumers shopping online, handling billions of searches from users around the world every day.

For businesses with a standalone website, search engine optimisation (SEO) can boost natural Google traffic alongside paid promotion. The right SEO strategy can significantly improve your website's visibility, but it requires real expertise and skill, and less experienced teams often run into difficulties in practice. This article explains in detail how to use proxy tools to support SEO content optimisation and help businesses overcome these challenges to boost their website's natural traffic and rankings.

What is SEO?

SEO (Search Engine Optimization) is the process of optimising a website to improve its rankings in search engine results pages (SERPs) to attract more traffic.

Next, I'll show you where Google search results come from.

![Google search results](https://b352e8a0.cloudflare-imgbed-b69.pages.dev/file/7acacac1c3a87594c9e9e.png)

These are natural (organic) search results. Google uses crawler bots to crawl as much of the world's web as it can.


Google's algorithms are designed to display the results they judge to best match the intent behind a user's keyword search. Paying Google does not raise a page's position in the natural results, and there is no way to buy a higher natural ranking.

SEO optimises a website according to the ranking rules of search engines to improve its position on search engines such as Google. Because the traffic comes from searches that users initiate themselves, SEO reaches visitors who are actively looking for what you offer. Best of all, clicks on natural results are free.

SEO is usually divided into two forms: on-site SEO and off-site SEO:

On-site SEO: mainly concerns the website's own content, including original copy and keyword placement. Original content and sensible keyword placement not only improve the quality of the website but can also help its pages rank sooner.

Off-site SEO: optimisation carried out outside the website, promoting the site through external channels to bring in more traffic and revenue. Common off-site SEO methods include earning external backlinks and exchanging links with partner sites.

To use SEO well, you first need to understand how search engines crawl web pages, and then optimise your pages to rank for specific keywords or search queries. Making your pages more relevant increases the number of visitors to your standalone site and, in turn, its profitability.

Why is SEO important?

Quite simply, when your website ranks higher, you attract more quality traffic and these visitors are more likely to convert into your potential customers. When people search for information, products or services that they are interested in or need, most of them will only click on the first few results on the search results page. Therefore, if you can get your website to rank at the top, you have a better chance of attracting those potential customers.

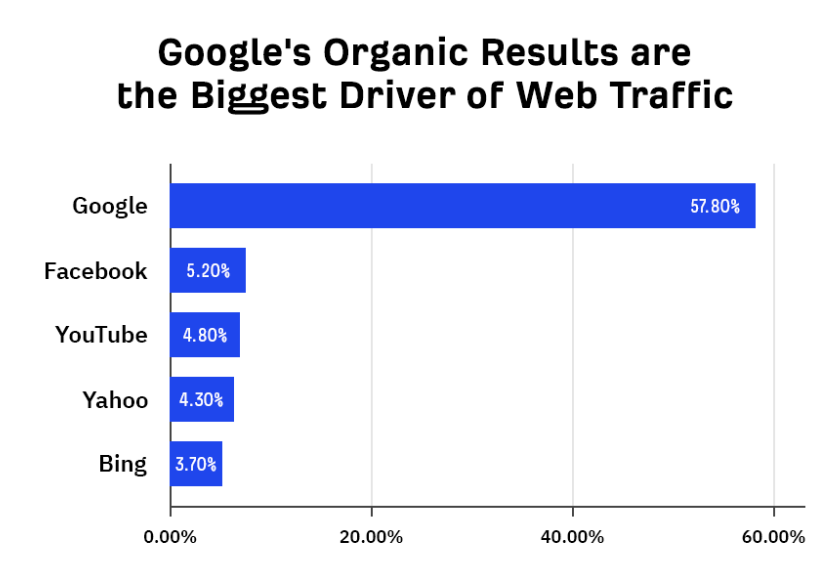

For many websites, Google's natural search results are the largest single source of traffic, sometimes outweighing all other sources combined. SEO is important because it improves your website's ranking in Google, which in turn increases natural search traffic.

Cost-effective

Beyond labour costs, SEO requires little additional spending. Clicks on natural search results are free, however many visitors you attract.

Increase traffic without additional budget

Unlike SEM (pay-per-click) adverts, SEO does not require extra budget to gain more traffic. By consistently publishing high-quality content, SEO can snowball and bring in more and more natural traffic.

Traffic still exists after SEO services are discontinued

Unlike SEM ads, whose rankings and traffic disappear as soon as you stop paying, an optimised site usually keeps receiving natural traffic after active SEO work stops, and may even perform better over time. This makes SEO an option with long-term benefits.

More trust in SEO rankings

Users tend to trust SEO rankings more than SEM results. Paid results are clearly labelled as ads, which lowers user trust, whereas a natural ranking reflects the strength of the website itself. Websites that frequently appear at the top of search results can establish themselves as industry experts and gradually win users' trust.

SEO helps to build a company's brand image

A company's reputation has a significant impact on user choice. SEO can help ensure that positive content appears when people search for your company or products, improving your online reputation and reducing the risk of losing potential customers to negative information.

SEO optimised rankings are more attractive for users to click on

When searching for services, users are more inclined to click on websites that rank high naturally. These sites usually offer a better user experience and signal the company's strength. Content in paid ad positions, by contrast, is often viewed with more scepticism and finds it harder to win users' trust.

Long-lasting natural ranking results

SEO takes time, partly because search engines evaluate new sites for a period before ranking them well. Once rankings improve, however, they tend to last: as long as the site is actively maintained, its rankings and traffic usually remain stable.

What is Crawling in SEO?

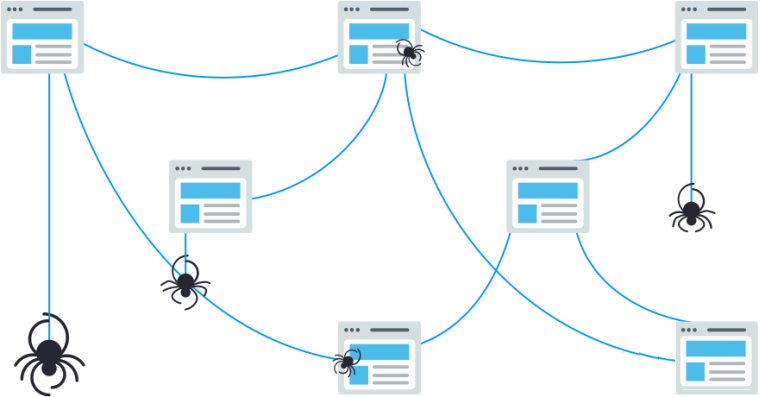

Crawling in SEO refers to the use of robots (also known as crawlers or spiders) by search engines to automatically browse and collect information about web pages on the Internet. These crawlers travel from site to site, collecting page information and storing it in the search engine's index. The index is like a giant library from which a librarian can find a book (web page) to help users find exactly what they are looking for. When a user enters a query into a search engine, the search engine quickly finds relevant pages in the index and presents them to the user.
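To make the crawl-then-index idea concrete, here is a minimal Python sketch under simplifying assumptions: the "web" is a hypothetical in-memory dictionary of pages and links (no network access), the crawler is a plain breadth-first search, and the index is a simple inverted index mapping each word to the pages that contain it. Real search-engine crawlers and indexes are far more sophisticated; this only illustrates the mechanics.

```python
from collections import deque

# Hypothetical in-memory "web": URL -> (page text, outgoing links).
WEB = {
    "https://example.com/": (
        "welcome to our shoe shop",
        ["https://example.com/running", "https://example.com/about"],
    ),
    "https://example.com/running": ("running shoes for trail and road", ["https://example.com/"]),
    "https://example.com/about": ("about our shop and team", []),
}


def crawl(seed, web):
    """Breadth-first crawl from a seed URL; each page is visited once."""
    seen, queue, order = {seed}, deque([seed]), []
    while queue:
        url = queue.popleft()
        order.append(url)
        _, links = web.get(url, ("", []))  # unknown URLs have no text or links
        for link in links:
            if link not in seen:
                seen.add(link)
                queue.append(link)
    return order


def build_index(urls, web):
    """Inverted index: word -> set of crawled URLs whose text contains it."""
    index = {}
    for url in urls:
        text, _ = web.get(url, ("", []))
        for word in text.lower().split():
            index.setdefault(word, set()).add(url)
    return index


def search(index, query):
    """Return the URLs that contain every word of the query (boolean AND)."""
    matches = [index.get(word, set()) for word in query.lower().split()]
    return set.intersection(*matches) if matches else set()


pages = crawl("https://example.com/", WEB)
index = build_index(pages, WEB)
print(search(index, "running shoes"))  # {'https://example.com/running'}
```

Like the librarian in the analogy, `search` never rereads the pages at query time; it only consults the index built during the crawl.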

Crawling is only one part of the picture. Search engines also use complex algorithms to analyse the pages in their index, weighing hundreds of ranking factors to decide the order of pages in the search results. Key factors include content relevance, page authority, user experience and content freshness: how relevant the page content is to the user's query keywords, the authority of the page and the website, page loading speed, mobile compatibility, page layout, navigation, and how recently the content was updated all affect a page's ranking. Therefore, by consistently providing high-quality content, optimising your website's structure and improving the user experience, you can increase the authority and relevance of your pages and achieve higher rankings.
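One way to picture "weighing many factors" is a weighted sum of per-factor scores. The sketch below is purely illustrative: the four factors come from the paragraph above, but the weights and the page scores are hypothetical numbers invented for the example, not anything Google publishes or uses.

```python
# Hypothetical factor weights, invented for illustration; they are NOT Google's.
WEIGHTS = {"relevance": 0.4, "authority": 0.3, "experience": 0.2, "freshness": 0.1}


def score(signals):
    """Weighted sum of factor scores; each signal is assumed to be in 0..1."""
    return sum(weight * signals.get(name, 0.0) for name, weight in WEIGHTS.items())


def rank(pages):
    """Return page names ordered from highest to lowest score."""
    return sorted(pages, key=lambda name: score(pages[name]), reverse=True)


# Hypothetical pages: one very fresh and relevant, one stronger across the board.
pages = {
    "fresh-but-thin": {"relevance": 0.9, "authority": 0.2, "experience": 0.5, "freshness": 1.0},
    "authoritative": {"relevance": 0.6, "authority": 0.9, "experience": 0.8, "freshness": 0.2},
}
print(rank(pages))  # ['authoritative', 'fresh-but-thin']
```

The takeaway is the shape of the calculation, not the numbers: a page that excels on one factor (0.62 here) can still be outranked by one that is solid on several (0.69).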

Webmasters can control and optimise crawling in several ways so that search engines can crawl and index their content efficiently. A robots.txt file specifies which pages crawlers may and may not crawl. Submitting an XML sitemap helps search engines understand the site's structure and find important pages more efficiently. A well-designed internal linking structure ensures that every important page can be reached through links from other pages. A fast, reliable server prevents crawl failures caused by server problems. Because crawling is a key step in SEO, optimising the crawling process and understanding ranking algorithms helps webmasters improve their site's visibility and rankings in search engines and attract more traffic.
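Two of these controls, robots.txt and the XML sitemap, are easy to check or generate with Python's standard library. This is a sketch with a hypothetical robots.txt and hypothetical URLs on example.com: `is_crawlable` tests a URL against the rules with `urllib.robotparser`, and `build_sitemap` emits a minimal sitemap in the sitemaps.org format (only `<loc>`, no optional fields).

```python
import xml.etree.ElementTree as ET
from urllib.robotparser import RobotFileParser

# Example robots.txt content for a hypothetical site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /cart/
Disallow: /search
Sitemap: https://www.example.com/sitemap.xml
"""


def is_crawlable(url, agent="Googlebot", robots_txt=ROBOTS_TXT):
    """Check a URL against robots.txt rules using the standard-library parser."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(agent, url)


def build_sitemap(urls):
    """Return a minimal XML sitemap (sitemaps.org protocol) listing the URLs."""
    urlset = ET.Element("urlset", xmlns="http://www.sitemaps.org/schemas/sitemap/0.9")
    for url in urls:
        entry = ET.SubElement(urlset, "url")
        ET.SubElement(entry, "loc").text = url
    return ET.tostring(urlset, encoding="unicode")


print(is_crawlable("https://www.example.com/products/shoes"))  # True
print(is_crawlable("https://www.example.com/cart/checkout"))   # False
print(build_sitemap(["https://www.example.com/", "https://www.example.com/products/shoes"]))
```

One caveat: Python's parser applies rules in file order, whereas Google uses the most specific (longest) matching rule, so results can differ when Allow and Disallow lines overlap. For simple files like this one they agree.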

How can I improve my website's search engine optimisation?

Advantages of automated SEO tasks

Automating SEO tasks is essential for productivity. Collecting data manually, for example for competitor analysis, is time-consuming and tedious. However, automated SEO tasks send a large number of requests, potentially hundreds or even thousands of Google queries per day, and sending them all from your own IP address quickly leads to CAPTCHAs and blocks. Proxies change the IP address that websites see, so you can automate the collection of large amounts of data from search engines or competitors' sites while making it much harder for those sites to block or identify you.

Choosing the Right Residential Proxies

To get the most out of automated SEO, choosing the right proxies is crucial. Companies can choose data centre proxy IPs, residential proxy IPs, or a combination of both. Residential Proxies cost more, but because they are sourced from real users' devices they have a better IP reputation, are less likely to trigger CAPTCHAs or blocks, and support localised search queries for a wider range of locations. If you choose Residential Proxies, make sure they meet the key criteria: a large pool of quality IPs from fixed-line or mobile ISPs, extensive geographic coverage, and the ability to select locations.

Residential Proxies in SEO

Residential Proxies have several applications in SEO. First, they mimic different home networks, which helps avoid being identified and blocked by search engines, especially during large-scale data crawls. Second, they let you view region-specific search results to understand local search trends and keyword performance, so you can optimise localised content. Third, spreading requests across many IPs reduces the risk of IP blocks caused by frequent requests. Finally, Residential Proxies hide your real IP address, increasing the anonymity and security of SEO work and making your SEO strategy harder for competitors to discover.

Improve the effectiveness of your SEO strategy

Residential Proxies also let you simulate real user behaviour to test and validate SEO strategies, for example through click-through rate (CTR) testing and user experience testing, so that you can better optimise your website's content and structure. In this way, SEO researchers can collect comprehensive data from multiple geographic locations, improving its accuracy and completeness and leading to more precise and effective SEO strategies.

Choosing the right Residential Proxies is a crucial step towards successful SEO. We recommend a high-quality Residential Proxies service such as PROXY.CC, which offers a large pool of quality IPs from fixed-line and mobile ISPs, extensive geographic coverage, and location selection, so that the data you collect accurately reflects local search pages. With these advantages, Residential Proxies can significantly improve the efficiency and effectiveness of your SEO efforts.

If you want to know more about PROXY.CC, you can click the link to check it out and try its features. You can also get 500 MB of free traffic on your first registration by contacting customer service.

PROXY.CC Residential Proxies Tutorials
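CTR itself is simple arithmetic (clicks divided by impressions), but when comparing two variants it is worth checking whether the difference is larger than chance. The sketch below uses a standard two-proportion z-test on hypothetical click and impression counts for two title variants of the same page; the numbers are invented for illustration.

```python
from math import sqrt


def ctr(clicks, impressions):
    """Click-through rate: clicks divided by impressions."""
    if impressions <= 0:
        raise ValueError("impressions must be positive")
    return clicks / impressions


def ctr_z_score(clicks_a, imps_a, clicks_b, imps_b):
    """Two-proportion z-score for the CTR difference (B minus A), pooled variance."""
    pooled = (clicks_a + clicks_b) / (imps_a + imps_b)
    se = sqrt(pooled * (1 - pooled) * (1 / imps_a + 1 / imps_b))
    if se == 0:
        return 0.0  # no variation at all (e.g. zero clicks on both variants)
    return (ctr(clicks_b, imps_b) - ctr(clicks_a, imps_a)) / se


# Hypothetical data: (clicks, impressions) for title variants A and B.
a, b = (48, 1200), (66, 1200)
print(f"A: {ctr(*a):.1%}, B: {ctr(*b):.1%}")  # A: 4.0%, B: 5.5%
print(round(ctr_z_score(*a, *b), 2))  # 1.73
```

A |z| below about 1.96 means the difference is not significant at the usual 5% level, so here variant B looks better but the sample is still too small to be sure.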

We purchased a Residential Proxies plan from PROXY.CC and obtained the proxy's IP address, port, username and password from the provider.

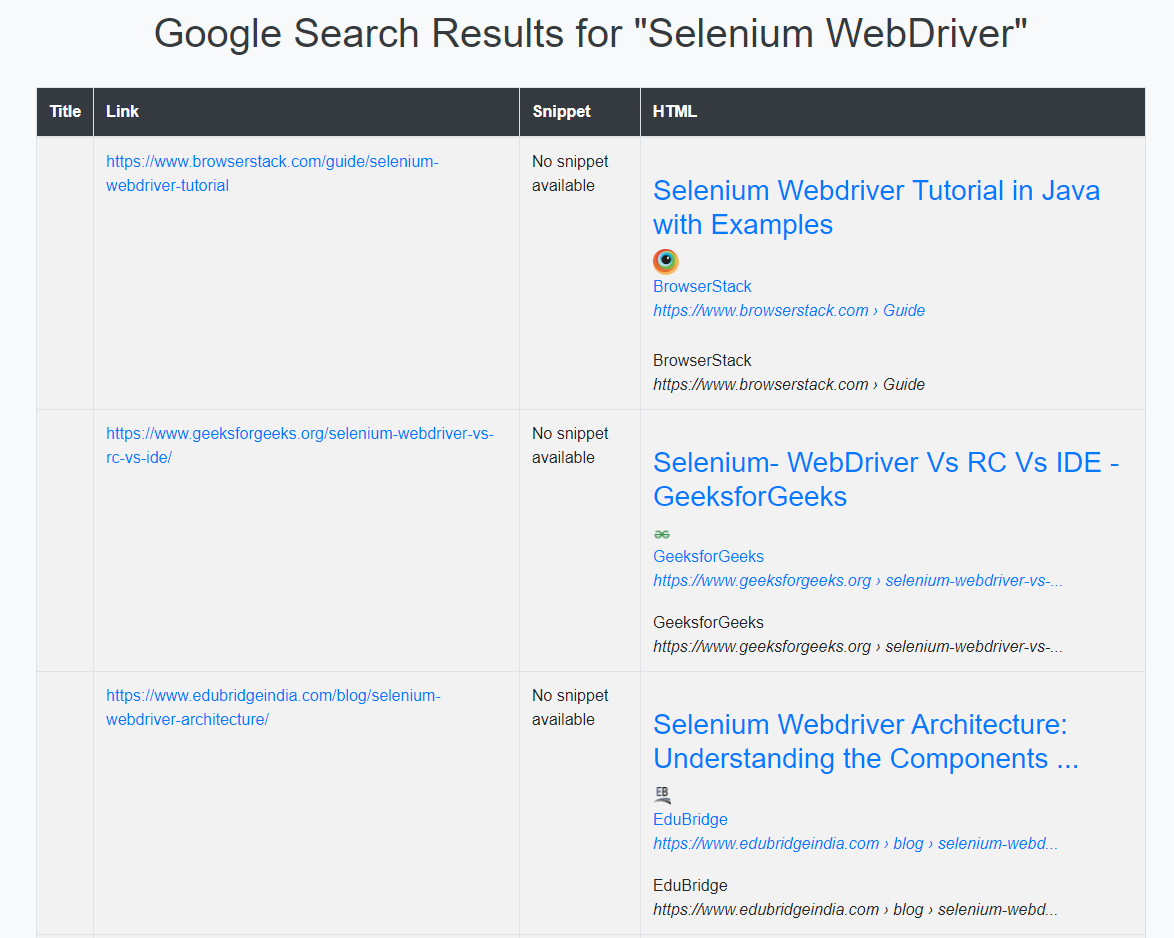

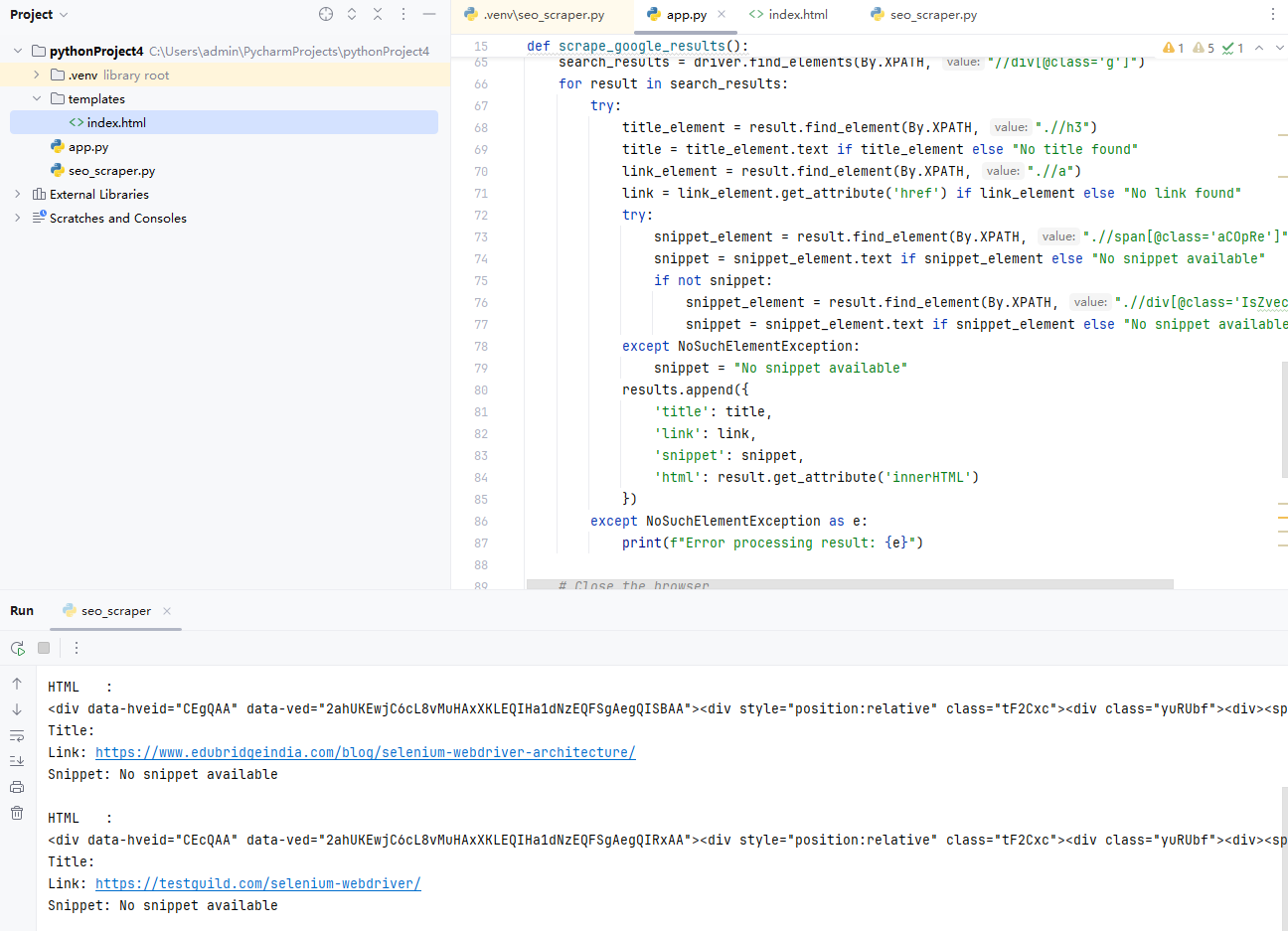

We built a web crawler application in Python using the PyCharm integrated development environment (IDE). The main libraries and tools are Selenium and Flask (Python libraries) and Bootstrap (a CSS framework). With these, we implemented the web crawler and the web presentation layer in PyCharm.

To implement the crawler, we used the Selenium library, which automates a real browser so that we can run searches and scrape Google search results. To access Google from the United States, we configured a US proxy: host and port eu.gw.proxy.cc:4512, username pcc-A12345678_area-US_life-5, and password A** (masked). With proxies, we can simulate web access from different regions, increasing the diversity of the crawled data.
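Authenticated proxies are usually addressed as a URL of the form scheme://user:password@host:port. The sketch below builds that URL from the three values above, percent-encoding the credentials so characters such as @ or : in a password do not break it. The `{"http": ..., "https": ...}` mapping is the shape used by the requests library's `proxies` argument and by Selenium Wire's `proxy` option; whether your provider expects an `http://` URL is an assumption to verify. Note also that Chrome's `--proxy-server` switch does not accept a username and password, which is why authenticated proxies with Selenium are often configured through Selenium Wire or a small browser extension.

```python
from urllib.parse import quote


def proxy_url(host_port, username, password, scheme="http"):
    """Build scheme://user:password@host:port with percent-encoded credentials."""
    user = quote(username, safe="")  # encode characters such as '@' or ':'
    pwd = quote(password, safe="")
    return f"{scheme}://{user}:{pwd}@{host_port}"


def proxies_dict(url):
    """Route both HTTP and HTTPS traffic through the same proxy URL."""
    return {"http": url, "https": url}


# Placeholder values in the same shape as the tutorial's; replace with your own.
url = proxy_url("eu.gw.proxy.cc:4512", "pcc-A12345678_area-US_life-5", "Aa12345678")
print(url)  # http://pcc-A12345678_area-US_life-5:Aa12345678@eu.gw.proxy.cc:4512
print(proxies_dict(url)["https"] == url)  # True
```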

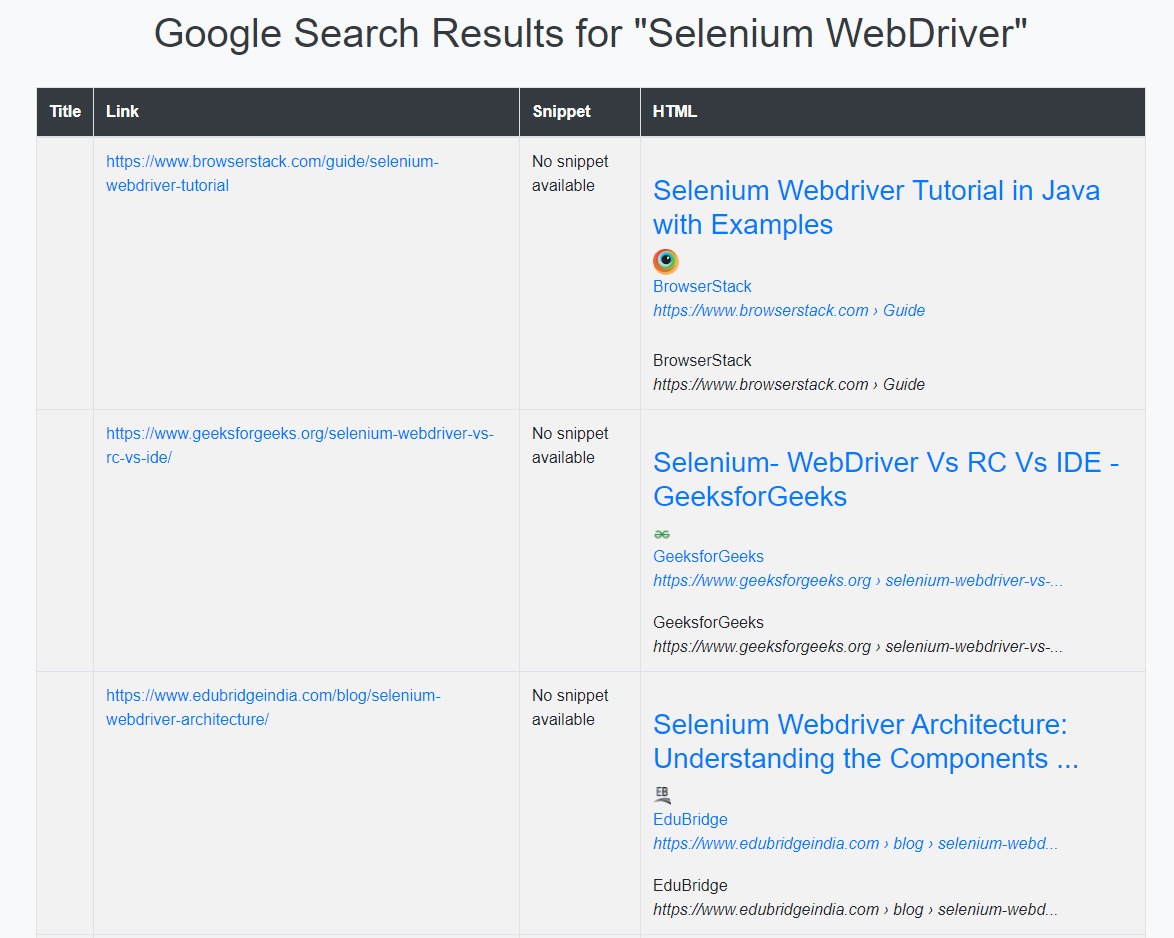

In SEO, crawlers automatically collect and analyse search engine results so that we can track the ranking and performance of specific keywords. In this project, we use the Flask framework to display the crawl results on a web page and Bootstrap to style them so they are easy to read. The keyword we searched for is "Selenium WebDriver".
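The presentation step can be sketched independently of the crawler. In the project, Flask renders `templates/index.html` with Jinja2, which auto-escapes HTML in `.html` templates. To keep this example runnable without Flask, the sketch below builds the same kind of Bootstrap-styled results table with the standard library only, escaping titles and URLs by hand. The CDN link and its Bootstrap version are assumptions; pin whatever version you actually use.

```python
from html import escape

# Bootstrap 5 stylesheet from a public CDN (assumed version; pin the one you use).
BOOTSTRAP_CSS = "https://cdn.jsdelivr.net/npm/bootstrap@5.3.3/dist/css/bootstrap.min.css"


def render_results(keyword, results):
    """Render (title, url) pairs as a Bootstrap-styled, HTML-escaped results table."""
    rows = "\n".join(
        f'      <tr><td>{rank}</td><td><a href="{escape(url)}">{escape(title)}</a></td></tr>'
        for rank, (title, url) in enumerate(results, start=1)
    )
    return f"""<!doctype html>
<html lang="en">
<head>
  <meta charset="utf-8">
  <meta name="viewport" content="width=device-width, initial-scale=1">
  <link rel="stylesheet" href="{BOOTSTRAP_CSS}">
  <title>Results for {escape(keyword)}</title>
</head>
<body class="container py-4">
  <h1 class="h3">Search results for "{escape(keyword)}"</h1>
  <table class="table table-striped">
    <thead><tr><th>#</th><th>Result</th></tr></thead>
    <tbody>
{rows}
    </tbody>
  </table>
</body>
</html>"""


# Hypothetical scraped results; in the real app these come from the Selenium crawler.
page = render_results("Selenium WebDriver", [("Example result", "https://example.com/a?x=1&y=2")])
print(page)
```

Inside a Flask route you could return this string directly, although passing the results to `render_template` is the more idiomatic choice.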

Project structure and code

Project structure

```
pythonProject4/
|-- .venv/
|   |-- Lib/
|   `-- Scripts/
|-- app.py
|-- templates/
|   `-- index.html
`-- seo_scraper.py
```

The result after running is as follows

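Download ChromeDriver: make sure you have downloaded the ChromeDriver version that matches your installed Chrome and added its location to your system PATH environment variable. If not, download it from the ChromeDriver website. With Selenium 4.6 or later, Selenium Manager can usually fetch a matching driver automatically.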

Steps in PyCharm


1. Create a new project: download and install PyCharm, open it, and create a new Python project.

2. Create a new Python file: add a new Python file to the project, such as `seo_scraper.py`.

3. Copy and paste code: copy the crawler code and paste it into the `seo_scraper.py` file.

4. Configure proxy information: replace `your_proxy_ip:port`, `your_proxy_username` and `your_proxy_password` in the code with your proxy details.

5. Install the necessary libraries: open PyCharm's terminal, activate the virtual environment and install the required libraries with `pip install flask selenium`.

6. Configure the proxy and keywords: open `seo_scraper.py` and set the proxy details and search keyword in the code:

```python
proxy_ip_port = "eu.gw.proxy.cc:4512"  # change to your proxy IP and port
proxy_user = "pcc-A12345678_area-US_life-5"  # change to your proxy username
proxy_password = "Aa12345678"  # change to your proxy password

# search_box is the Google search input element located earlier in seo_scraper.py
search_box.send_keys("Selenium WebDriver")  # change to the keywords you want to search for
```

7. Write the Flask app and Selenium crawler in `app.py`.

8. Run the app: run `app.py`. The Flask server starts on `http://127.0.0.1:5000/`; open this address in your browser (if the app does not open it automatically) to see the crawled Google search results.

This is a crawl showing the results of a search for the keyword "Selenium WebDriver" in the United States.


What are SEO Articles? What are the factors that affect search engine rankings?

SEO article writing is more than stringing together keywords and related content; it involves a series of careful optimisation strategies to make the article stand out in search engines. First, choosing and optimising keywords is a basic but important step. For example, when writing an article on "e-commerce trends", besides using the main keyword "e-commerce trends" in the title and paragraphs, you should identify related long-tail keywords such as "latest trends in e-commerce in 2024" or "how to optimise your e-commerce platform with artificial intelligence". This refined keyword strategy not only widens the article's reach but also attracts users looking for specific information, which increases click-through rates and page rankings.

Second, in-depth content that fulfils user needs is key to better SEO results. For example, an article on "SEO optimisation tips" should not only introduce basic SEO strategies such as keyword density and meta tag optimisation, but also include concrete how-to guidance and recent algorithm changes, such as how to respond to Google's core updates and how to optimise for semantic search. Detailed steps and practical advice make the article a go-to reference for users and improve the page's authority and stickiness. Charts, case studies and supporting data further add depth and value.
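A quick way to audit this keyword strategy on a draft is to count where each keyword appears. The sketch below is a hypothetical helper, not a standard tool: it counts case-insensitive, whole-phrase matches with regular expressions and reports whether the main keyword is in the title and which long-tail keywords the body covers. The sample article text is invented for the example.

```python
import re


def keyword_hits(text, keyword):
    """Count case-insensitive whole-phrase matches of keyword in text.

    Uses word-boundary anchors, so it assumes the keyword starts and ends
    with a letter or digit.
    """
    pattern = r"\b" + re.escape(keyword.lower()) + r"\b"
    return len(re.findall(pattern, text.lower()))


def placement_report(title, paragraphs, main_keyword, long_tail):
    """Summarise where the main and long-tail keywords appear in an article."""
    def in_body(kw):
        return any(keyword_hits(p, kw) for p in paragraphs)

    return {
        "main_in_title": keyword_hits(title, main_keyword) > 0,
        "main_in_body": sum(keyword_hits(p, main_keyword) for p in paragraphs),
        "long_tail_found": [kw for kw in long_tail if in_body(kw)],
        "long_tail_missing": [kw for kw in long_tail if not in_body(kw)],
    }


report = placement_report(
    title="E-commerce trends to watch",
    paragraphs=[
        "The latest trends in e-commerce in 2024 are reshaping retail.",
        "Many e-commerce trends start on mobile.",
    ],
    main_keyword="e-commerce trends",
    long_tail=[
        "latest trends in e-commerce in 2024",
        "how to optimise your e-commerce platform with artificial intelligence",
    ],
)
print(report["main_in_title"], report["main_in_body"])  # True 1
print(report["long_tail_missing"])
```

Here the report shows that the AI-focused long-tail keyword is not yet covered, which suggests a section the article could add.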
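Keyword density, mentioned above, has no single official definition. The sketch below uses one common convention, (matches × words in the keyword) ÷ total words × 100; some tools divide the raw match count by the word count instead. Treat the number as a sanity check against keyword stuffing rather than a target.

```python
import re

WORD = re.compile(r"[\w'-]+")


def keyword_density(text, keyword):
    """Keyword density in percent: (matches x words per keyword) / total words x 100.

    This is one common convention; other tools may compute it differently.
    """
    words = WORD.findall(text.lower())
    phrase = WORD.findall(keyword.lower())
    if not words or not phrase:
        return 0.0
    n = len(phrase)
    # Slide a window of n words across the text and count exact phrase matches.
    matches = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == phrase)
    return 100.0 * matches * n / len(words)


sample = "SEO tips for beginners: these SEO tips cover meta tags and keyword research."
print(round(keyword_density(sample, "SEO tips"), 1))  # 30.8
```

The sample is deliberately short and repetitive; in a real article a density this high would read as stuffing.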

Internal and external linking strategies play a significant role in improving the search ranking of your articles. Internal linking not only enhances the internal structure of your website, but also improves the relevance of each page. For example, linking to a page on your website about "content marketing strategy" in a "digital marketing" article can help search engines better understand the structure of your website and increase the weight of related pages. At the same time, external links should point to high-authority websites or academic resources, such as citing reports from well-known market research organisations or the views of authoritative experts, which not only enhances the credibility of the article, but also helps to improve the article's search engine ranking.
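To audit a page's linking, you can split its links into internal and external ones. This standard-library sketch parses the `<a href>` tags of an HTML string, resolves relative links against the page URL, and compares host names. The sample HTML and URLs are hypothetical, and hosts are compared exactly, so www.example.com and example.com count as different sites.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse


class LinkCollector(HTMLParser):
    """Collect the href of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def classify_links(html, page_url):
    """Split a page's links into internal and external absolute URLs."""
    collector = LinkCollector()
    collector.feed(html)
    site = urlparse(page_url).netloc
    internal, external = [], []
    for href in collector.hrefs:
        absolute = urljoin(page_url, href)  # resolve relative links like "/about"
        parts = urlparse(absolute)
        if parts.scheme not in ("http", "https"):
            continue  # skip mailto:, tel:, javascript: and similar links
        (internal if parts.netloc == site else external).append(absolute)
    return internal, external


html = """
<p>See our <a href="/content-marketing-strategy">content marketing strategy</a>
and this <a href="https://research.example.org/report">market report</a>.
<a href="mailto:editor@example.com">Contact us</a></p>
"""
internal, external = classify_links(html, "https://www.example.com/digital-marketing")
print(internal)  # ['https://www.example.com/content-marketing-strategy']
print(external)  # ['https://research.example.org/report']
```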

Finally, technical factors such as page load speed and mobile compatibility are key to user experience and SEO performance. Page load speed directly affects user retention and search rankings: if an article's page takes too long to load, users may leave and search engines may rank it lower. Measures such as optimising image sizes, using a content delivery network (CDN) and enabling browser caching help improve loading speed. With so much browsing now done on mobile devices, it is also vital that articles display well on every device. Responsive design and testing across different devices ensure that readers have a good experience whatever device they use, which directly improves the page's SEO performance.
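Two of these measures can be spot-checked with a short script. This standard-library sketch assumes you already have the page's HTML and its Cache-Control response header (fetching them is out of scope): `has_viewport` looks for a viewport meta tag, which responsive pages normally include, and `cache_max_age` reads how long browsers may cache the resource. It is only a quick check, not a substitute for a full audit tool such as Lighthouse.

```python
from html.parser import HTMLParser


class ViewportCheck(HTMLParser):
    """Detect a <meta name="viewport"> tag, a basic signal of responsive design."""

    def __init__(self):
        super().__init__()
        self.found = False

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        # Attribute values can be None for valueless attributes, hence the "or".
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.found = True


def has_viewport(html):
    """Return True if the HTML contains a viewport meta tag."""
    checker = ViewportCheck()
    checker.feed(html)
    return checker.found


def cache_max_age(cache_control):
    """Return the max-age (seconds) from a Cache-Control header, or None if absent."""
    for directive in cache_control.split(","):
        name, _, value = directive.strip().partition("=")
        if name.lower() == "max-age" and value.isdigit():
            return int(value)
    return None


print(has_viewport('<meta name="viewport" content="width=device-width, initial-scale=1">'))  # True
print(cache_max_age("public, max-age=31536000, immutable"))  # 31536000
print(cache_max_age("no-store"))  # None
```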

What else can Residential Proxies do?

Residential Proxies such as PROXY.CC can play an important role in SEO work. Rotating Residential Proxies help businesses avoid being blocked by search engines for sending frequent requests from one IP, so SEO tools and data crawlers keep running. Such a proxy service not only masks your real IP but also simulates visits from different regions, making data collection more diverse and reliable and supporting better-informed keyword and page-performance optimisation. For example, by using IP addresses from around the world, users can obtain more accurate geo-specific results to refine localised search strategies.

In addition, PROXY.CC's unlimited-traffic proxy option is particularly useful for SEO analyses that require large-scale data crawling. It avoids interruptions caused by traffic limits and ensures the continuity and completeness of data capture. For tasks such as competitor analysis, keyword research and market trend forecasting, unlimited proxies can significantly increase data-processing capacity and efficiency, resulting in more precise and effective SEO strategies.

Conclusion

SEO (Search Engine Optimisation) is an important strategy for improving website visibility and attracting quality traffic. Through the effective use of crawling techniques, businesses can optimise the content and structure of their websites to achieve higher rankings in search engine results pages (SERPs).

Choosing the right Residential Proxies such as PROXY.CC can significantly improve the efficiency and effectiveness of your SEO efforts, ensure diversity and accuracy in data crawling, and protect your business's SEO strategy from being easily discovered by competitors. Automating SEO tasks and utilising a high-quality content strategy are key to successful SEO optimisation.

By consistently providing high-quality content, optimising the structure of the website, enhancing the user experience and using Residential Proxies to simulate real user behaviour, businesses can develop a more precise and effective SEO strategy to ensure that they stand out in a competitive marketplace. Ultimately, successful SEO optimisation will lead to long-term traffic growth and increased brand trust, leading to greater commercial success!